A 1-year update on my “Self-Taught” Machine Learning Path

You can find me on twitter @bhutanisanyam1

This blog post is to share with you an update about my “Machine Learning Journey”, 1 year down the path.

A few of my readers have been kind enough to reach out and ask whether the interview series has ended, or why I haven’t been putting out more blog posts recently. This blog post will (hopefully) also answer that:

Earlier this year, I was invited by Google to interview for the 1-year Google AI Residency Program. My application was considered for the “final round” of interviews, the “onsite interviews”. The preparation process kept me busy, which meant I contributed less to the blog series as well as to the online communities.

Before I talk all about the AI Residency, I would like to share a few other updates:

Completing my Undergraduate Degree

During the past 4 years, I have been a CS student during the day from 9 to 5, and ML student/freelancer/blogger/community member/Meetup TA during the 5 to 9(s).

I’m happy to share that my final-year thesis with Rishi Bhalodia, “Generating Music using Deep Learning and NLP”, written under the guidance of Dr. C. N. Subalalitha and inspired by the work of Christine Mcleavy, has been accepted.

University has overall been a great experience for me. I got exposed to many amazing fields, found my love for “AI”, and even managed to pursue a career in it and start a small business with Rishi (something I never imagined). I’m excited about the next phase: becoming a full-time ML student and continuing to grow in the field. The chance to live away from the comfort of home was also a great learning experience and one of the defining moments for me. For the next phase, I will be moving back to my hometown and continuing to work on my Machine Learning Journey.

I’d also like to take this chance to thank my guide, Dr. Subalalitha, for believing in a project with training loops extending over days (we used a single-GPU machine), as well as for allowing me to prepare for the AI Residency interview, which happened during the same period.

(All details on the project and research work are coming soon in a blog post, with full source code. To give you a teaser, we’re generating music using PyTorch and some fastai magic. For much better things, I’d suggest that you check out MuseNet by Christine.)

For now, I’m told that I’m officially allowed to write “CS Engineer” on my profile.

Data Science Network

I have always been a fan of giving back to the community. With DSNet, we hope to create a welcoming community for Data Science beginners as well as people looking to grow in the field.

DSNet is a joint effort by some of the most active ML members of the Indian “AI” Community: Aakash N S, Siddhant Ujjain, Kartik Godawat, as well as myself. We would love to grow the community across the whole country and beyond.

To share some stats: as of today, DSNet has:

- >1k Active Slack Members

- >1.3k Newsletter Subscribers

- >4.5k Active Meetup Members

- (Upcoming) Weekly Paper Discussions

- (Upcoming) Weekly Kaggle Discussions

Come, join us if the above points sound interesting.

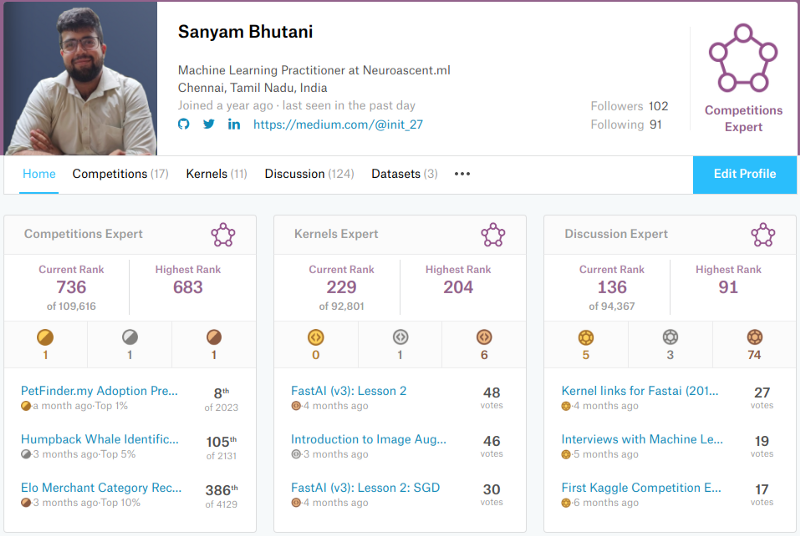

Kaggle Gold Finish, Getting Ranked as Top 1% across all categories

Kaggle is really the home of Data Science. I have been a long-time fan of Kaggle as well as Kagglers. Many of the best have been kind enough to be a part of the interview series as well.

Earlier this year, I joined a team with Mohammad Shahebaz, Aditya Soni, Luca Massaron, Bac Nguyen Xua and Rishi Bhalodia for the Kaggle PetFinder.my Adoption Prediction Challenge.

Dreams do come true: Our team finished 8th in the comp, bringing the first competition category Gold to my profile. It also put me in the Top 1% ranking across all divisions:

- Competitions

- Kernels

- Discussions

If you’re interested, here is the link to our solution: https://lnkd.in/fVGRFvJ

To be honest, all credit goes to my teammates, who really were the brains behind the gold medal. PS: Our team name says “We Need A Fulltime Job”. We are all on the job market, and our DM(s) are open to all 🙂

My only regret is that, due to the interview preparations, I chose not to get involved in competitions after PetFinder, which was really hard considering how addictive Kaggle is. Hopefully, I will now get back on the leaderboards soon!

Interviewing for the Google AI Residency, 2019

Earlier this year I received an email that really changed my life. It was a dream come true moment for me when I received an update on my application.

I had been invited for the final round of interviews for THE GOOGLE AI RESIDENCY! It was really a moment to make my gurus from fast.ai proud! As I had mentioned in my journey series, I chose to opt out of college placements, stick to the ML path and seek opportunities in this domain. This was really the dream opportunity that not just I, but thousands of others, could wish for.

I spent the next few months preparing for Data Structures and Coding questions, reading a lot of research papers, and doing fast.ai Part 2 (coming out later in June this year).

The interview process consists of a Google Hangouts interview followed by an onsite interview. My “pre-screening” round was skipped and I was invited directly to the on-site interviews, which consisted of 2 rounds. I used the following resources from the AI Residency FAQ:

- HackerRank’s 30 days of code challenge

- Data Structures and Algorithms in Python by Michael T. Goodrich (book)

- Cracking the Coding Interview by Gayle Laakmann McDowell (book)

- Chris Olah’s blog

- Ian Goodfellow’s Deep Learning textbook (Initial Chapters)

Along with these, I brushed up on fastai Part 1 and actively participated in the “Live version of Part 2” until the date of my interview, after which I had to allocate time to finalising my undergraduate project. Now that my school is over, I will be getting back on the fastai forums.

In spring, I finally got the opportunity to enter a Google Office in NYC.

I need to say this out loud:

It. was. amazing.

Google flew me out for the NYC interview. I also got to meet members of the current residency program and have lunch with a few researchers (Dear Google, thank you for the nicest lunch I’ve ever had).

This was also a great opportunity for me to check out the “AI Scene” in NYC. My cousin Arkin Khosla, (then) a student at NYU, was kind enough to host me and talk about the “AI scene in ‘silicon alley’ ”.

I also got to meet one of my Machine Learning Heroes, Sylvain Gugger, whom I wanted to thank in person for all the great things at fastai and for the awesome work from him and Jeremy Howard. I also got the chance to talk about how research and collaboration happen at fast.ai, how Sylvain works, and Swift for TensorFlow (all details coming up in a blog post).

Unfortunately, I was informed this week that my application did not make it through the process.

While I feel disheartened, I’m really grateful to Google for considering my application, and I wish the AI Residents joining Google the best of luck with their amazing research.

I did not have a backup plan or an active application to any other “residency program”, and I couldn’t be active in the TWiMLAI x Fastai Study group or on the fastai forums during this time. All of that gives me a huge push to put my foot down and get back to work.

Thanks to my subscribers who have pointed out, and even reached out to me personally to mention, that the blog has been quiet. I can promise that I will be working on a few exciting upcoming posts (regular and weekly, of course). Please keep an eye out for them. Thanks again for excusing my inconsistency, earlier due to the interview preparation and later due to the emotional effect of the rejection.

An open letter of Thanks to fastai, Jeremy Howard, Rachel Thomas, Sylvain Gugger and the community

My original plan was to publicly thank fast.ai after making it to the residency, so that I could share with the community, “Hey! Look, fastai helped me make it to my dream, Google!” and thank them from a good position.

However, I won’t deny that even getting shortlisted for the interview, as well as everything I have achieved on my “AI” path, is thanks to fast.ai:

- The (little) knowledge that I have gained about the field.

- Blog Writing: All thanks to the support from the great community as well as just following Rachel Thomas’s advice on blogging.

- Community building: I’m a huge fan of giving back, thanks to the motivation from fast.ai. I have been really involved with TWiMLAI Meetups. Rachel’s advice extends even to technical talks. This year I’ve targeted giving 100 hours of tech talks and I’ve already given ~50 hours, which has been a great learning experience. (I also want to add that I find tech talks really intimidating, and I chose to work on them in order to become a better speaker.)

- Kaggle: Jeremy teaches approaches that apply very, very well to Kaggle. Fastai has really been my starting point on Kaggle.

This is an open letter of thanks to the fastai team for helping me on my path to becoming a practitioner. All of my achievements in the field (small as they are) are thanks to fast.ai: the course, the people behind it and the community, all of the amazing people that I met on the forums, interacted with and got answers to my “stupid questions” from.

In the words of one of my Machine Learning Heroes, Alexandre Cadrin-Chênevert:

We [fast.ai community] are like a big international family. The democratization of deep learning is an important social mission and we are all part of it.

Before ending this section, I would also like to thank all of the online communities that I have had the chance to participate in, learn from and even contribute to. Thank you for being patient with all of my questions and for your kind answers.

Finally, to the fastai team: Thank you!

PS: Even though I have done a LOT of online courses, fast.ai had the most impact on my journey. It’s the only course that I’d recommend for getting started and even for getting to the cutting edge of Deep Learning.

What’s next for me?

For my learning path:

- Restart the overdue blog posts.

- Contribute more to DSNet and TWiMLAI.

- Aim to become a Kaggle Competitions Master.

- Share 1 paper summary each week.

- Start a weekly archived Kaggle Comp review.

- (Soon) Contribute to DSNet YouTube channel, (Upcoming) Podcast.

Like always, I will keep sharing updates about my ML path via this blog. Thanks for reading!

Subscribe to my Newsletter for updates on my new posts, interviews with my Machine Learning heroes, and Chai Time Data Science.